In the keynote 'Open Source, Community, and Consequence: The Story of MongoDB,' Akshat Vig and Andrew Davidson traced MongoDB's rise as a leading database. They framed the story as less about the database technology itself than about the community movement that embraced the document model, a novel approach when MongoDB was founded. They described the challenges along the way, including outages and growing pains, alongside the passion and dedication of the community that helped MongoDB become a default choice for many mission-critical workloads. The speakers also reflected on their own career paths and on MongoDB's culture as a New York-based company in a tech landscape often centered on Silicon Valley. The narrative underscores the importance of community-driven innovation in the open source ecosystem.

MongoDB Open Source Community

More from Dev & Open Source

-

Top DevOps Tools 2026

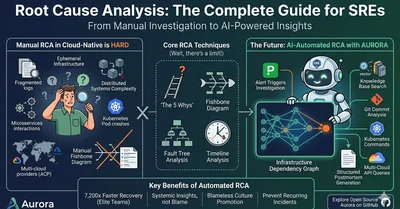

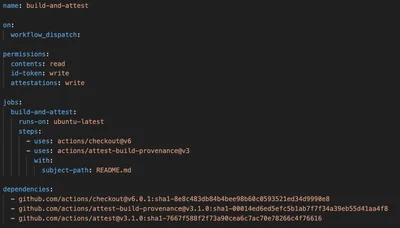

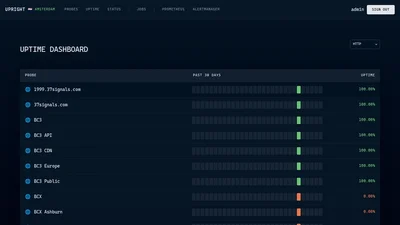

An overview of the top DevOps tools for 2026 highlights solutions for infrastructure, security, observability, and incident management, aimed particularly at SRE teams. The list favors mostly open-source tools and deliberately leaves out AI chatbots, focusing instead on practical problems such as secrets management, CI/CD security, and root cause analysis in complex cloud environments. Notable entries include Upright for synthetic monitoring, HyperDX for unified observability, and enhanced GitHub Actions security features aimed at mitigating supply chain attacks. The selection reflects a DevOps landscape in which automation, security, and reliability are central to modern software delivery, and in which engineering teams need these tools to keep increasingly distributed and automated systems resilient and efficient.

-

Edge Computing Reality Shift

Edge computing is shifting from being viewed as a mere geographical extension of cloud infrastructure to being treated as a distinct operational reality. According to Bruno Baloi, the edge is now a complex domain where physical devices and software systems interact under difficult conditions such as intermittent connectivity and high data generation rates. This shift demands a new architectural mindset, because traditional cloud-native assumptions no longer hold in edge environments. Engineers and organizations need to understand the change in order to design resilient, efficient systems that operate effectively at the edge, which means rethinking infrastructure strategies for modern distributed computing.

-

GitHub Copilot AI Coding Tools Comparison

In 2026, GitHub Copilot remains the market leader among AI coding assistants, distinguished by its deep GitHub integration, multi-model AI capabilities including GPT-4o and Claude Opus 4, and a comprehensive feature set that includes code completion, chat, code review, and autonomous coding agents. It competes with various AI tools that cater to different developer needs: Tabnine focuses on privacy and on-premise deployment for enterprises, Cursor offers a fully AI-native IDE with advanced multi-file editing and agentic coding at a higher price, and Codeium provides a strong free alternative with generous usage limits. The choice between these tools depends largely on developer priorities such as budget, privacy, IDE preferences, and workflow integration. This landscape highlights the evolving AI coding ecosystem where users must balance features, cost, and deployment models to find the best fit for their coding workflow.

-

Architectural Governance with GenAI

The rapid adoption of generative AI (GenAI) in software development has drastically accelerated code production, challenging traditional architectural governance and code review processes. While AI enables developers to generate large volumes of code quickly, it introduces new risks such as architectural flaws, security vulnerabilities, and fragile assumptions that are difficult to detect with conventional review methods. Researchers have documented a growing number of security vulnerabilities linked to AI-generated code, underscoring the need for enhanced oversight. To maintain system cohesion and safety at AI speed, experts advocate combining centralized architectural decision-making with automated, decentralized governance tools that enforce machine-readable architectural intent. This shift highlights that while code creation becomes cheaper and faster, the critical bottleneck remains in aligning teams on architectural vision and validating AI outputs to prevent costly failures.

-

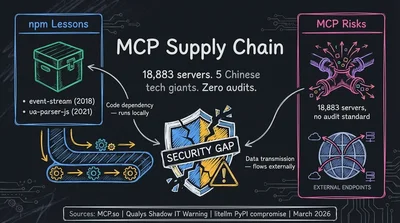

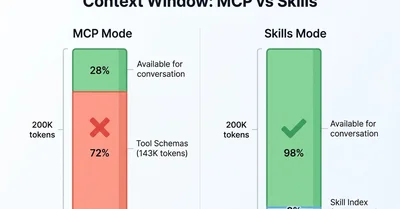

MCP Protocol Security Risks

The Model Context Protocol (MCP), initially celebrated as a universal standard for AI tool integration, is facing growing criticism and security concerns. While MCP enables interoperability between AI models and external tools, recent reports highlight significant risks, including intellectual property leakage, supply chain vulnerabilities, and poor security auditing. Its rapid adoption by major companies, including Chinese tech giants, has raised geopolitical and data privacy alarms, especially because MCP servers, unlike traditional package managers, transmit data externally. Academic research has also exposed numerous attack vectors against MCP, underscoring the protocol's enlarged attack surface. These developments threaten MCP's viability as a secure infrastructure standard for AI and have prompted some firms to abandon it in favor of simpler, more secure alternatives.

-

AI Discovers Critical Solana Bug

In early 2026, an AI security agent autonomously identified a critical Remote Code Execution vulnerability in Solana's rBPF virtual machine, specifically in the 'Direct Mapping' optimization introduced in Solana v1.16. The flaw could have allowed attackers to execute arbitrary code on validator nodes, mint unlimited tokens, and steal validator keys, threatening a blockchain network with over $9 billion in total value locked. The discovery earned the largest bug bounty ever awarded to an AI system, highlighting the growing role of AI in blockchain security auditing. The finding underscores that memory-safe programming languages do not by themselves rule out such flaws, and it signals a new era in which AI-assisted bug hunting becomes essential to the security of DeFi protocols.

-

Fedora Forge Migration

The Fedora community has announced the readiness of Fedora Forge, a new collaborative development platform based on Forgejo, to replace the aging Pagure platform. This transition follows a year of preparation by the Community Linux Engineering team to modernize Fedora's infrastructure. Pagure, which has served the community for many years, is being retired due to its stagnation and high maintenance demands. Fedora teams are encouraged to migrate their projects to Fedora Forge before the Flock 2026 conference in June, after which Pagure will be archived in read-only mode. This migration marks a significant step in Fedora's broader infrastructure modernization, aiming to retire all Pagure instances by the 2027 Fedora 46 release.

-

Linux in Data Engineering

Linux remains the foundational platform for modern data engineering, powering the infrastructure beneath cloud providers like AWS, GCP, and Azure. The transition from legacy GUI-based ETL tools to scalable, open-source Linux distributions such as Red Hat, CentOS, and Ubuntu has enabled the rise of distributed processing frameworks like Hadoop, Spark, Kafka, and Airflow. These tools rely heavily on Linux kernel features for efficient memory management, disk I/O, and cluster concurrency, making Linux proficiency essential for data engineers managing complex pipelines. Despite the abstraction layers in managed cloud services, understanding and working directly with Linux systems is critical for building resilient, scalable data workflows. This underscores Linux's irreplaceable role in the data engineering ecosystem and the broader cloud infrastructure landscape.