The AI landscape in 2026 is witnessing a significant shift towards open-source, privacy-focused local AI assistants that run entirely on personal hardware without relying on cloud services. Projects like OpenClaw, OpenYak, GPT4All, and Ollama are enabling users to harness powerful large language models (LLMs) and AI agents locally, ensuring data privacy and eliminating cloud costs. These tools offer capabilities ranging from office automation and data analysis to coding assistance and customizable AI workflows, addressing growing concerns over data security and cloud dependency. Despite challenges in setting up local AI stacks, the availability of user-friendly APIs and comprehensive tooling is making it increasingly feasible for developers and professionals to adopt private AI solutions. This trend not only empowers individuals and organizations with greater control over their data but also signals a broader move towards decentralized AI usage.
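As a rough illustration of the "user-friendly APIs" point, the sketch below sends a prompt to a locally running Ollama server over its HTTP generate endpoint, so the prompt never leaves the machine. It assumes Ollama is listening on its default local port and that a model has already been pulled. The helper names `build_generate_request` and `generate` are illustrative only and are not part of any library.

```python
import json
import urllib.request

# Assumption: an Ollama server is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the server replies with a single JSON object
    # whose "response" field holds the completion text.
    return body["response"]
```

Calling `generate("llama3", "Summarize this changelog in two sentences.")` would return the model's reply as a string. The model name here is only an example and must match one installed locally.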

Open-Source Local AI Assistants

More from Dev & Open Source

-

AI Developer Fatigue

The widespread adoption of AI code assistants, now used by over 75% of professional developers and generating billions in revenue, has introduced a new challenge: AI fatigue among developers. While these tools initially boosted productivity and accelerated feature delivery, engineers report a cognitive overload caused by constant AI suggestions that shift their role from creator to validator. This passive assistance model leads to mental exhaustion and a loss of control and focus, as developers must continuously review AI-generated code that often lacks project-specific context, creating hidden technical debt. The phenomenon highlights the complex trade-offs in integrating AI into software development workflows, emphasizing the need for better management of AI’s impact on developer well-being and code quality.

-

Kubernetes Vulnerability Scanner False Positives

In hardened Kubernetes environments, popular vulnerability scanners such as Trivy and Grype often report false positives: they flag vulnerabilities that are present in the image but practically non-exploitable because of security settings such as readOnlyRootFilesystem and read-only volume mounts. These scanners analyze container images without considering the runtime security context, so their reports can be misleading. The result is an inflated count of reported vulnerabilities, which complicates security assessments and can divert attention from real threats. Addressing these false positives is crucial for improving the accuracy and efficiency of vulnerability management in Kubernetes deployments.
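One way to bring that runtime context into a review is to read it from the Pod manifest and place it next to the scanner report. The sketch below is a minimal example, assuming the manifest has already been parsed into a Python dict. It does not decide whether any finding is exploitable; it only collects the per-container `readOnlyRootFilesystem` setting and the mounts that remain writable. The function name `hardening_summary` and the output keys are hypothetical.

```python
from typing import Any, Dict


def hardening_summary(pod: Dict[str, Any]) -> Dict[str, Dict[str, Any]]:
    """Summarize read-only settings for each container in a Pod manifest."""
    summary: Dict[str, Dict[str, Any]] = {}
    for container in pod.get("spec", {}).get("containers", []):
        # readOnlyRootFilesystem is set on the container-level securityContext.
        security = container.get("securityContext") or {}
        mounts = container.get("volumeMounts") or []
        summary[container["name"]] = {
            "read_only_root": security.get("readOnlyRootFilesystem", False) is True,
            # A mount is writable unless it explicitly sets readOnly: true.
            "writable_mounts": [
                m["mountPath"] for m in mounts if not m.get("readOnly", False)
            ],
        }
    return summary
```

A reviewer could then triage findings for containers with a read-only root and no writable mounts differently from findings for fully writable containers, while still treating the scanner output as the source of truth for what is installed in the image.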

-

Modern Git Branching Strategies

Git branching strategies have evolved significantly from the traditional GitFlow model, which involved multiple long-lived branches and often caused merge conflicts and slow delivery. Modern development teams, especially those working with cloud and microservices architectures, are increasingly adopting simpler workflows such as GitHub Flow and Trunk-Based Development, which emphasize faster integration and continuous delivery. Understanding these strategies is crucial for improving team productivity, reducing complexity, and enabling faster software releases. This shift reflects broader changes in software development practices that prioritize agility and collaboration.

-

ProxySQL Multi-Tier Release

ProxySQL has launched a new multi-tier release strategy alongside the release of ProxySQL 3.0.6, designed to better serve its diverse user base. The strategy includes three distinct tracks: Stable for mission-critical production environments prioritizing reliability, Innovative for early access to new features, and AI/MCP for exploring advanced capabilities including AI integrations. This approach reflects ProxySQL's response to growing demands for both stability and cutting-edge technology in database infrastructure management. The move is significant as it allows users to choose a release track that best fits their operational needs, balancing innovation with stability.

-

AI Agents Sharing Debugging Knowledge

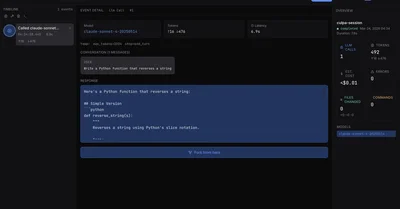

AI agents traditionally suffer from a lack of persistent memory, causing each agent to relearn solutions to errors independently, which wastes time and computational resources. To address this, a new system called Prior was developed, enabling AI agents to share debugging histories and solutions in a collective knowledge base. An experiment demonstrated that when agents access Prior, they can retrieve fixes for previously encountered errors instead of re-deriving them, significantly improving efficiency. This innovation is a key advancement in multi-agent orchestration, where coordinated AI agents collaborate and share context to solve complex problems more effectively. The breakthrough matters because it reduces redundant work, cuts costs, and accelerates AI-driven software development workflows in a market where AI coding assistants are now essential tools.
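The retrieve-instead-of-re-derive idea can be sketched as a shared lookup keyed by a normalized error signature. This is not Prior's actual design or API, which the summary does not describe; it is a hypothetical in-memory illustration. The names `error_signature` and `SharedFixStore` and the normalization rules are assumptions made for the example.

```python
import re
from typing import Dict, Optional


def error_signature(message: str) -> str:
    """Normalize an error message so equivalent errors share one key."""
    # Replace hex addresses first so their digits are not treated as numbers.
    sig = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)
    # Collapse volatile details such as line numbers and counts.
    sig = re.sub(r"\d+", "<n>", sig)
    # Normalize whitespace and case.
    return " ".join(sig.split()).lower()


class SharedFixStore:
    """Hypothetical shared store mapping error signatures to known fixes."""

    def __init__(self) -> None:
        self._fixes: Dict[str, str] = {}

    def record(self, error_message: str, fix: str) -> None:
        """Store a fix so other agents can reuse it later."""
        self._fixes[error_signature(error_message)] = fix

    def lookup(self, error_message: str) -> Optional[str]:
        """Return a previously recorded fix, or None if the error is new."""
        return self._fixes.get(error_signature(error_message))
```

An agent would call `lookup` before attempting its own diagnosis and `record` after a fix succeeds. A real system would also need persistence, access control, and some way to judge whether a stored fix still applies.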

-

Self-Hosted File Servers and Artifact Management

In 2026, developers increasingly rely on self-hosted file servers and robust artifact management systems to maintain control over their development workflows and infrastructure. Self-hosted file servers, ranging from traditional FTP and WebDAV to modern cloud-native solutions, offer teams cost-effective, customizable alternatives to commercial cloud storage for file sharing, artifact backups, and team collaboration. Meanwhile, artifact management tools such as Docker Registry, Nexus, and Artifactory have become foundational to modern DevOps, ensuring reliable storage and retrieval of the build artifacts that continuous integration and deployment pipelines depend on. Integrating these systems with developer tools on platforms like macOS enhances productivity by reducing context switching and streamlining debugging. This trend underscores a broader shift towards developer autonomy, security, and efficiency in software delivery processes.

-

Legacy Python 2 Challenges

In 2026, Python 2 remains a significant challenge for developers: it has been officially end-of-life since 2020, and its support ecosystem keeps shrinking. Legacy systems, particularly those built on Jython, which implements only Python 2, face operational and regulatory constraints that prevent easy migration to Python 3 or newer technologies. This forces developers to maintain obsolete codebases with limited tooling and community support, which complicates both maintenance and security. The issue highlights broader concerns about Python's future, as its aging foundation and the rise of newer languages put pressure on its long-term relevance. Addressing these legacy challenges is crucial for organizations that depend on Python in critical environments.